A few days ago, I was chatting with my friend Jan. We were talking about the latest piece of news: yet another service we use regularly had just announced its new MCP server.

For those who don’t live inside these acronyms, MCP stands for Model Context Protocol. In very simple terms, it is a way for an AI system to interact with software or a service without going through the traditional graphical interface. It does not click buttons, open menus, or fill out forms the way a human would. It communicates directly with the service through a textual, structured interface designed for machines.

A kind of service entrance for AI.
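Here is what that "textual, structured interface" looks like in practice. In MCP, client and server exchange JSON-RPC 2.0 messages. Invoking one of a server's tools is a request with the method `tools/call`, which carries the tool's name and its arguments. The minimal Python sketch below only builds such a request. The tool name `search_orders` and its arguments are hypothetical. A real client would also open a connection, perform the initialization handshake, and parse the server's reply.

```python
import json


def make_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking an MCP server to run one tool."""
    message = {
        "jsonrpc": "2.0",        # MCP messages follow JSON-RPC 2.0
        "id": request_id,        # lets the client match the reply to this request
        "method": "tools/call",  # the MCP method for invoking a tool
        "params": {
            "name": tool_name,       # which capability to run
            "arguments": arguments,  # structured input instead of forms and buttons
        },
    }
    return json.dumps(message)


# Hypothetical tool and arguments, for illustration only.
print(make_tool_call(1, "search_orders", {"customer": "ACME", "status": "open"}))
```

No menus, no screens. The AI sends a name and some data, and the service answers in the same structured form.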

At some point, Jan said something that was obvious in hindsight: “Everything is becoming a plugin for AI.”

It was obvious. And precisely because it was obvious, I had missed it.

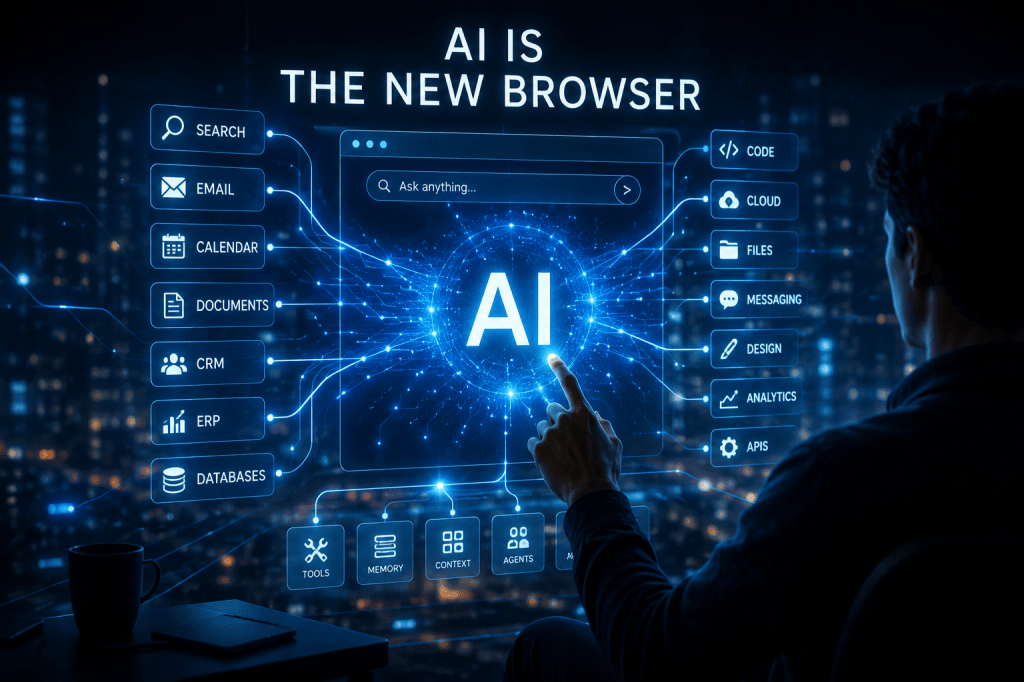

Every day, a larger portion of the digital world we use — search engines, databases, productivity tools, CRMs, ERPs, code repositories, document systems, cloud services — becomes accessible not only to humans, but also to AI systems. Not through screens, windows, and buttons, but through protocols, APIs, tools, connectors, and MCP servers.

The consequence is enormous: the AI client is becoming the new browser.

Not necessarily “the browser” in the technical sense. Chrome, Safari, Edge, and Firefox will continue to exist. But the browser’s most important historical role was to be the universal access point to the digital world. You opened the browser, searched for something, landed on a site, used an application, bought something, wrote something, worked, studied.

Now, more and more often, we open ChatGPT, Claude, Gemini, Perplexity, or another AI client and ask directly for the outcome.

We no longer want to “go to a website.” We want to know, decide, book, compare, generate, fix, buy, summarize, automate.

The web was a network of pages.

The cloud became a network of applications.

AI is turning everything into a network of capabilities.

From Browsing to Asking

Over the last thirty years, we learned how to "browse," or, as we used to say, to "surf" and "navigate" the web. These are interesting metaphors: they describe moving through an uncertain sea. Open Google, type a question, get ten blue links, choose a result, open a page, go back, compare, click again.

It was a cognitive activity. But it was also work.

Over time, that work became less visible. First Google started answering some questions directly. Then came knowledge panels, snippets, maps, rich results, and featured answers. Finally came AI-generated summaries.

User behavior changed accordingly. Many people no longer search for “the right page.” They search directly for “the answer.” And when an AI client gives an answer that is good enough, there is often no reason to open ten websites.

One of my closest collaborators told me just yesterday: “How do you even use Google anymore? I haven’t done a traditional search in months.”

It was a joke, but not entirely.

The point is not that Google will disappear. That would be a naive prediction. The point is that the cognitive function of the search engine is moving somewhere else. We used to ask Google where to go. Now we ask AI what to do.

That is a massive difference.

Google organized access to information.

AI organizes access to action.

The Browser Was the Operating System of the Cloud

To understand what is happening, we need to look back.

When the world moved from the desktop to the cloud, the browser became central. At first, it was just a program for reading web pages. Then it became the gateway to email, documents, business applications, collaboration, accounting, project management, CRM, code, and analytics.

At some point, the browser became so important that some computers, such as Chromebooks, were designed almost entirely around it. And many of the mobile apps we use every day are, in practice, web applications wrapped inside a native container.

The browser won because it offered a powerful promise: install nothing, access everything.

Today, the AI client offers an even more radical promise: learn nothing, ask everything.

Of course, that sentence is deliberately provocative. It does not mean we will no longer need to learn. Quite the opposite. We will probably need to learn better, because people who do not understand the world will ask worse questions and get worse answers. But for many operational tasks, the learning curve will change dramatically.

I will no longer need to know where a function is inside a CRM.

I will no longer need to remember which menu to use in an ERP.

I will no longer need to know the search syntax of a document archive.

I will no longer need to open five applications to complete one process.

I will tell my AI client what I want to achieve. And the client will use the available tools.

At that point, the graphical interface stops being the center of the digital experience. It becomes one of several possible surfaces. Still useful for verifying, correcting, exploring. But no longer necessarily the main place where work happens.

MCP First

This transformation has deep implications for software companies.

For twenty years, we said: “UX first.” And we were right. In a world where humans interact directly with software, the quality of the interface determines adoption. Powerful software that is hard to use loses to software that may be less complete, but is smoother, clearer, and more pleasant.

But if the primary interlocutor of software becomes an AI agent, the priority changes.

UX does not disappear. Thinking that would be a mistake. But a new priority emerges: MCP first, or more generally, agent first.

It means designing applications that are easy for AI systems to use before they are easy for humans to use. It means exposing capabilities in a way that is clear, secure, composable, observable. It means describing available actions, constraints, permissions, side effects, required data, and possible errors extremely well.
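What might it mean to describe an action "extremely well"? MCP advertises each tool with a name, a description, and a JSON Schema for its input. The list above goes further than that. The Python sketch below is a hypothetical structure, not the MCP schema. It records permissions, side effects, and error codes next to the required inputs, so an agent can check a call before making it. `ToolSpec`, `create_opportunity`, and the `crm.write` scope are illustrative names only.

```python
from dataclasses import dataclass, field


@dataclass
class ToolSpec:
    """A machine-facing description of one action (hypothetical structure)."""
    name: str
    description: str     # what the action does, in plain language
    required: list[str]  # inputs the agent must supply
    permission: str      # scope the caller must hold
    side_effects: str    # what changes in the world if the action runs
    errors: dict[str, str] = field(default_factory=dict)  # error code -> meaning

    def check_call(self, arguments: dict, granted: set[str]) -> list[str]:
        """Return the problems that would block this call; an empty list means OK."""
        problems = []
        if self.permission not in granted:
            problems.append(f"missing permission: {self.permission}")
        for key in self.required:
            if key not in arguments:
                problems.append(f"missing argument: {key}")
        return problems


create_opportunity = ToolSpec(
    name="create_opportunity",
    description="Open a sales opportunity in the CRM and link it to an email.",
    required=["account_id", "email_id"],
    permission="crm.write",
    side_effects="Creates one new CRM record; calling it twice creates two.",
    errors={"ACCOUNT_NOT_FOUND": "account_id does not exist"},
)

# An agent holding only read access and missing one argument gets both problems back.
print(create_opportunity.check_call({"account_id": "42"}, {"crm.read"}))
```

The design choice here is that everything an agent needs in order to decide whether to act sits in one place. A person might have learned those rules from a manual or a tooltip. An agent can only learn them from the description itself.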

In a world dominated by agents, the new UX is the quality of the machine interface.

It is not only about how beautiful a button looks. It is about how understandable and reliable the action behind that button is when invoked by an AI system.

This may sound like a technical detail. It is not. It is a paradigm shift.

When the browser became central, companies had to rethink their applications for the web. When mobile became central, they had to rethink them for small screens, touch, notifications, and sensors. Now they will have to rethink them for autonomous or semi-autonomous agents that read, reason, call tools, and compose workflows.

The software of the future will not merely be used. It will be mediated.

The End of Interface Loyalty

There is another implication, and it may be even more disruptive: switching vendors could become much less painful.

Today, many companies remain locked into software not because it is the best, but because users have learned how to use it. They have memorized screens, processes, tricks, shortcuts, and odd behaviors. Changing systems means training thousands of people, rewriting manuals, dealing with resistance, and losing productivity.

But what happens if users no longer interact directly with the system?

If my AI client uses one service rather than another to search the internet, do I really care which one it is? If the result is good, fast, and reliable, the underlying engine becomes almost invisible.

The same logic can apply to enterprise software: CRM, office suites, CAD, ERP, HR systems, procurement platforms, business intelligence tools. The user may no longer know, or care, which application is being used behind the scenes to complete a task.

This reduces resistance to change. But it also reduces the historical power of UI as a competitive moat.

In the previous world, an application defended its market partly through user habit. In the agentic world, the habit moves from the specific software to the AI client. The user is no longer loyal to the business application. The user is loyal to the assistant that helps them get work done.

This should make many software companies uncomfortable.

The risk is not only being replaced by a better competitor. The risk is becoming an interchangeable backend.

Software Becomes Organs, AI Becomes the Nervous System

We can imagine the digital enterprise as a body.

For years, we built specialized organs. The CRM managed customers. The ERP managed business processes. The document system preserved knowledge. Email carried messages. CAD designed objects. BI analyzed data.

Each organ had its own interface, its own language, its own way of being used.

Now something different is emerging: a central nervous system that connects these organs and allows users to express intentions instead of executing procedures.

“Prepare a customer brief before my call.”

“Find the latest anomalies in the orders.”

“Compare these two contracts.”

“Open an opportunity in the CRM and link it to yesterday’s email.”

“Generate a sales proposal using the latest price list.”

“Check whether this CAD component is compatible with the bill of materials.”

In these examples, the user is not using an application. The user is expressing an intention. AI translates that intention into actions across multiple systems.

That is the real revolution.

It is not the chat.

It is not the language model.

It is not prompt engineering.

It is the shift from the interface as the place of control to intention as the unit of work.

Messaging Apps Become AI Clients Too

In this scenario, messaging applications will play a fundamental role.

Why? Because they are already the place where many people express intentions.

We message a coworker: “Can you send me the document?”

We message a group: “What’s the status of the project?”

We message a vendor: “Can you update the quote?”

We message ourselves: “Remind me to check this later.”

Messaging is already a primitive form of orchestration. The only difference is that today, there is usually another human on the other side. Tomorrow, there may be an agent.

Services like OpenClaw, remote usage of Claude Code, and agents integrated into Slack, Teams, or WhatsApp point in a clear direction: chat will not be only a communication channel, but a command surface.

In a sense, messaging will become a simplified version of the AI client. Less rich, perhaps less powerful, but everywhere. We will not always need to open a dedicated application. Sometimes, sending a message will be enough.

The future of human-computer interaction may look less like a control panel and more like an ongoing conversation.

The Model Is No Longer Enough: Enter the Harness

The technical center of gravity is also shifting.

Until recently, much of the AI conversation revolved around the model. Which LLM is better? GPT, Claude, Gemini, Llama, Mistral? How many parameters does it have? What is the cost per token? How large is the context window? Which benchmark does it win?

Then there was the API to call it. And a UI to chat with it.

That view is becoming insufficient.

Increasingly, the real value lies not only in the model but in everything around it. A concept is emerging that we will hear about more and more: the harness.

The harness is the operational runtime of the LLM. It is the software infrastructure that wraps around the model or agent and manages context, memory, tools, policies, security, information retrieval, planning, observability, evaluation, retries, fallbacks, and error handling.

It is not simply the UI.

It is not simply the AI client.

It is not simply the model.

It is what allows the model to work reliably in the real world.
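Of the harness duties listed above, retries, fallbacks, and observability are the easiest to show in a few lines. The Python sketch below is a toy, not any vendor's harness. `flaky_search` and `cached_search` are hypothetical stand-ins for a real tool and a degraded alternative. A production harness would also manage context, memory, policies, and evaluation.

```python
import time
from typing import Callable


def run_with_harness(
    primary: Callable[[str], str],
    fallback: Callable[[str], str],
    task: str,
    retries: int = 2,
    delay_s: float = 0.0,
) -> tuple[str, list[str]]:
    """Call the primary tool, retrying on failure, then fall back.

    Returns the result plus a trace of what happened (a minimal form of observability).
    """
    trace: list[str] = []
    for attempt in range(1, retries + 1):
        try:
            result = primary(task)
            trace.append(f"primary ok on attempt {attempt}")
            return result, trace
        except Exception as exc:  # a real harness would catch narrower error types
            trace.append(f"primary failed on attempt {attempt}: {exc}")
            if attempt < retries:
                time.sleep(delay_s)  # wait before retrying
    result = fallback(task)
    trace.append("fallback used")
    return result, trace


def flaky_search(task: str) -> str:
    """Hypothetical tool that is currently down."""
    raise TimeoutError("service unavailable")


def cached_search(task: str) -> str:
    """Hypothetical degraded alternative."""
    return f"cached answer for {task!r}"


answer, log = run_with_harness(flaky_search, cached_search, "latest order anomalies")
print(answer)
for line in log:
    print(line)
```

The model never sees any of this. What it gets is an answer, and what the operator gets is a trace explaining how that answer was obtained.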

An LLM by itself is like an engine on a test bench. It may be extremely powerful, but it is not yet a car. It needs an electronic control unit, chassis, sensors, brakes, transmission, safety systems, and diagnostics.

Or, if you prefer a musical metaphor: the model is the set of instruments. The harness is the conductor, the score, the acoustics of the hall, and the discipline of rehearsal.

This is where a major part of the competition will happen.

Two companies can use the same foundation model and get very different results. Often the difference is the harness: one of them has richer context, better tools, more useful memory, stronger guardrails, more rigorous evaluations, deeper integrations.

In the AI-native world, the question will not only be “Which model do you use?” It will be: “What system have you built around the model?”

The New Battle for the Access Point

Every digital era has had its dominant access point.

In the PC era, it was the operating system.

In the web era, it was the browser.

In the mobile era, it was the app store.

In the cloud era, it was the combination of browser, identity, and SaaS.

In the AI era, it may be the agentic client.

Whoever controls the access point controls much more than an interface. They control the distribution of attention. They decide which services are easy to use, which ones are suggested, which ones are ignored, and which ones become defaults.

That makes the strategic battle enormous.

If the AI client becomes the new browser, then model providers, operating systems, cloud vendors, enterprise platforms, and messaging applications will all enter the same arena. Each will want to be the place where the user starts in order to do anything.

The browser took us into the web.

The AI client may bring the digital world to us.

But Be Careful: Less Interface Does Not Mean Less Power

There is a risk, though.

When an interface disappears, our perception of the power it exercises often disappears with it.

If I open a website, I can at least partly see where I am. I see the brand, the page, the layout, the sources, the steps. I can compare, go back, open another tab. I may not always do it, but I can.

If, instead, I ask an AI system and receive a single elegant, concise, actionable answer, I may forget how many invisible decisions were made before that answer reached me.

Which source was consulted?

Which service was selected?

Which data was excluded?

Which action was preferred?

Which constraint was interpreted?

Which commercial interest influenced the result?

The browser showed us the chaos of the web. AI tends to hide it behind a coherent answer.

That is convenient. But it is also dangerous.

Because the interface does not really disappear. It moves deeper into the stack. It becomes ranking, policy, memory, context, orchestration, tool selection, and implicit priorities.

In the old world, we had to ask: “Can I trust this website?”

In the new world, we will have to ask: “Can I trust the entire chain that produced this answer?”

Conclusion: We Are Not Changing Tools. We Are Changing Posture.

Saying that “AI is the new browser” does not mean the browser will die tomorrow. Technologies rarely die theatrically. More often, they change roles.

Radio did not disappear with television.

Movies did not disappear with streaming.

The desktop did not disappear with the cloud.

The browser will not disappear with AI.

But the center of the digital experience can move.

And when the center moves, everything else gets redesigned: products, companies, skills, habits, business models, competitive power.

For users, this means less browsing and more delegation.

For companies, it means designing software that is legible and actionable by agents.

For vendors, it means competing to become the new universal access point.

For technologists, it means looking beyond the model and building reliable harnesses.

For everyone, it means understanding that the interface of the future may not be a screen, but a conversation.

The browser taught us to explore the digital world.

AI is teaching us to command it.

And as always in the history of technology, the real question is not only what we will be able to do with this new power.

The more important question is: who will decide for us when we stop clicking?

Thanks, Jan Morath, for inspiring me once again.
